AI Sniffer

Zero Trust Enabled AI Discovery

The Cognome platform gives healthcare organizations complete visibility into the AI running across their environments, so they can detect Shadow AI, monitor AI risk, and govern AI continuously.

Standard Zero Trust Frameworks Fall Short of Securing AI

Most organizations have AI running across their networks that no one has authorized and no one is monitoring. You can’t govern what you don’t know.

Third-Party Vendor AI Models

Unmanaged AI embedded in external software and workflows.

Internal AI Models

Custom-built AI systems operating without centralized governance.

Public AI Usage

ChatGPT, Claude, Gemini, and other GenAI tools used outside policy controls.

The rapid rise of Shadow AI workloads is creating a visibility gap across enterprise endpoints, networks, and edge environments.

Zero Trust Enabled AI Discovery and Governance

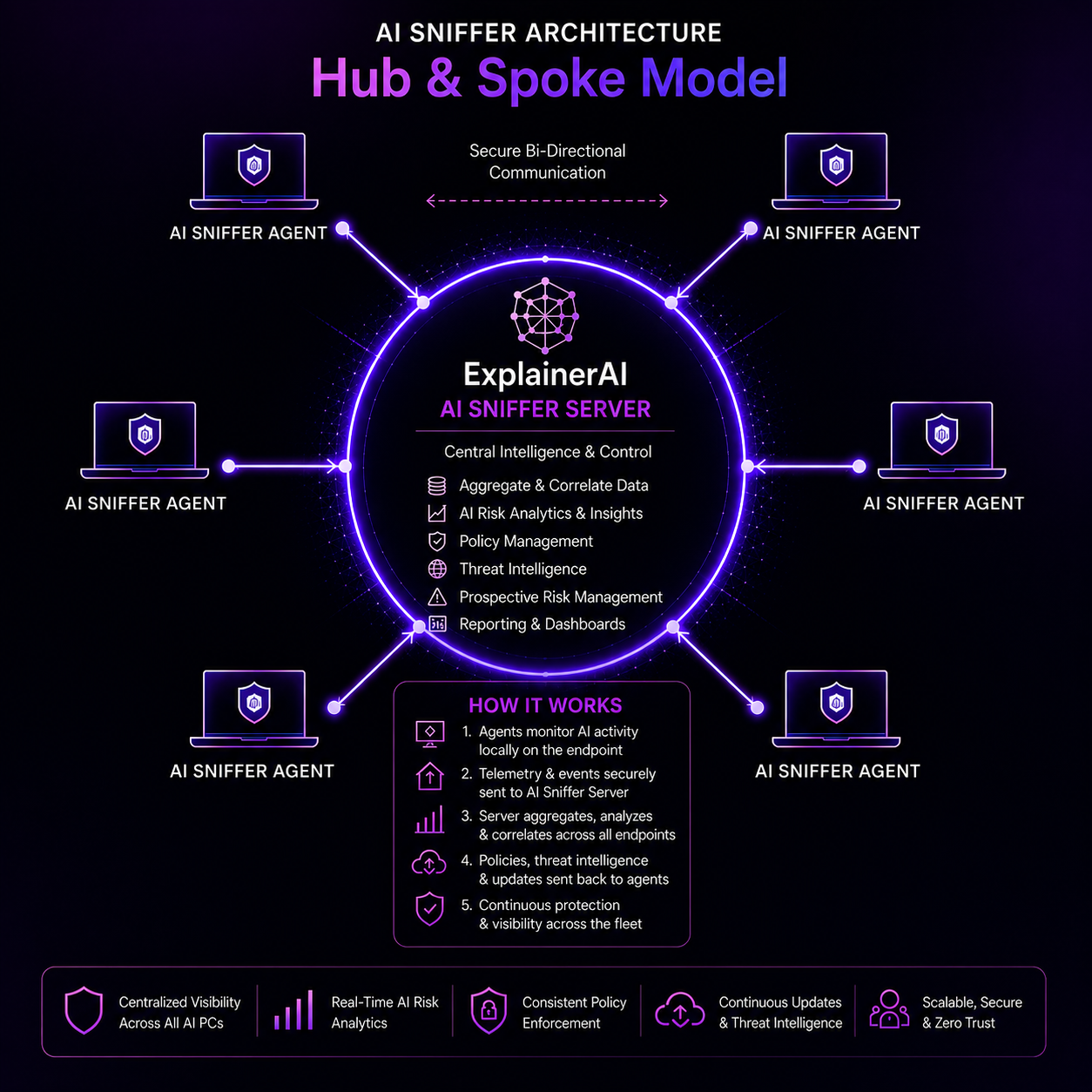

AI Sniffer continuously discovers AI across endpoints, networks, and edge devices, while ExplainerAI™ provides governance, monitoring, risk intelligence, centralized alerting, and audit readiness.

AI Sniffer

- Discovers unknown AI models and Shadow AI usage

- Detects AI across endpoints, network infrastructure, and edge devices

- Integrates third-party and internally developed AI models

ExplainerAI™ Governance

- Real-time detection of hallucinations, PHI leaks, bias, and drift

- Automated alerting and AI failure detection

- Audit-ready lineage and traceability aligned to HIPAA and AI risk standards

Continuous AI Risk Management

Detect & Connect

Discovers unknown AI models and integrates any AI or ML system operating in your environment.

Continuous Monitoring

Monitors AI usage in real time for hallucinations, PHI leakage, unsafe outputs, and bias.

Failure Detection & Alerts

Identifies drift, degradation, unsafe behavior, and operational failures before they become incidents.

Audit-Ready Evidence

Creates lineage, traceability, and governance evidence aligned to HIPAA and AI risk frameworks.

Thought Leadership and Industry Insights

Explore Cognome’s latest research and executive perspectives on AI governance, healthcare security, and enterprise AI risk management.